26 NVIDIA GPUs on SLE Micro #

26.1 Intro #

This guide demonstrates how to implement host-level NVIDIA GPU support via the pre-built open-source drivers on SLE Micro 6.0. These are drivers that are baked into the operating system rather than dynamically loaded by NVIDIA’s GPU Operator. This configuration is highly desirable for customers that want to pre-bake all artifacts required for deployment into the image, and where the dynamic selection of the driver version, that is, the user selecting the version of the driver via Kubernetes, is not a requirement. This guide initially explains how to deploy the additional components onto a system that has already been pre-deployed, but follows with a section that describes how to embed this configuration into the initial deployment via Edge Image Builder. If you do not want to run through the basics and set things up manually, skip right ahead to that section.

It is important to call out that the support for these drivers is provided by both SUSE and NVIDIA in tight collaboration, where the driver is built and shipped by SUSE as part of the package repositories. However, if you have any concerns or questions about the combination in which you use the drivers, ask your SUSE or NVIDIA account managers for further assistance. If you plan to use NVIDIA AI Enterprise (NVAIE), ensure that you are using an NVAIE certified GPU, which may require the use of proprietary NVIDIA drivers. If you are unsure, speak with your NVIDIA representative.

This guide does not cover integrating the NVIDIA GPU Operator for Kubernetes. However, you can still follow most of the steps in this guide to set up the underlying operating system and then configure the GPU Operator to use the pre-installed drivers by setting driver.enabled=false in the NVIDIA GPU Operator Helm chart, so that it picks up the drivers already installed on the host. More comprehensive instructions are available from NVIDIA here. SUSE also recently published a Technical Reference Document (TRD) that discusses how to use the GPU Operator and the NVIDIA proprietary drivers, should this be a requirement for your use case.

26.2 Prerequisites #

This guide assumes that you already have the following available:

At least one host with SLE Micro 6.0 installed; this can be physical or virtual.

Your hosts are attached to a subscription as this is required for package access — an evaluation is available here.

A compatible NVIDIA GPU installed (or fully passed through to the virtual machine in which SLE Micro is running).

Access to the root user. These instructions assume you are the root user, and not escalating your privileges via sudo.

26.3 Manual installation #

In this section, you are going to install the NVIDIA drivers directly onto the SLE Micro operating system as the NVIDIA open-driver is now part of the core SLE Micro package repositories, which makes it as easy as installing the required RPM packages. There is no compilation or downloading of executable packages required. Below we walk through deploying the "G06" generation of driver, which supports the latest GPUs (see here for further information), so select an appropriate driver generation for the NVIDIA GPU that your system has. For modern GPUs, the "G06" driver is the most common choice.

Before we begin, it is important to recognize that besides the NVIDIA open-driver that SUSE ships as part of SLE Micro, you might also need additional NVIDIA components for your setup. These could include OpenGL libraries, CUDA toolkits, command-line utilities such as nvidia-smi, and container-integration components such as nvidia-container-toolkit. Many of these components are not shipped by SUSE as they are proprietary NVIDIA software, or it makes no sense for us to ship them instead of NVIDIA. Therefore, as part of the instructions, we are going to configure additional repositories that give us access to said components and walk through certain examples of how to use these tools, resulting in a fully functional system. It is important to distinguish between SUSE repositories and NVIDIA repositories, as occasionally there can be a mismatch between the package versions that NVIDIA makes available versus what SUSE has built. This usually arises when SUSE makes a new version of the open-driver available, and it takes a couple of days before the equivalent packages are made available in NVIDIA repositories to match.

We recommend that you ensure that the driver version that you are selecting is compatible with your GPU and meets any CUDA requirements that you may have by checking:

The driver version that you plan on deploying has a matching version in the NVIDIA repository and ensuring that you have equivalent package versions for the supporting components available

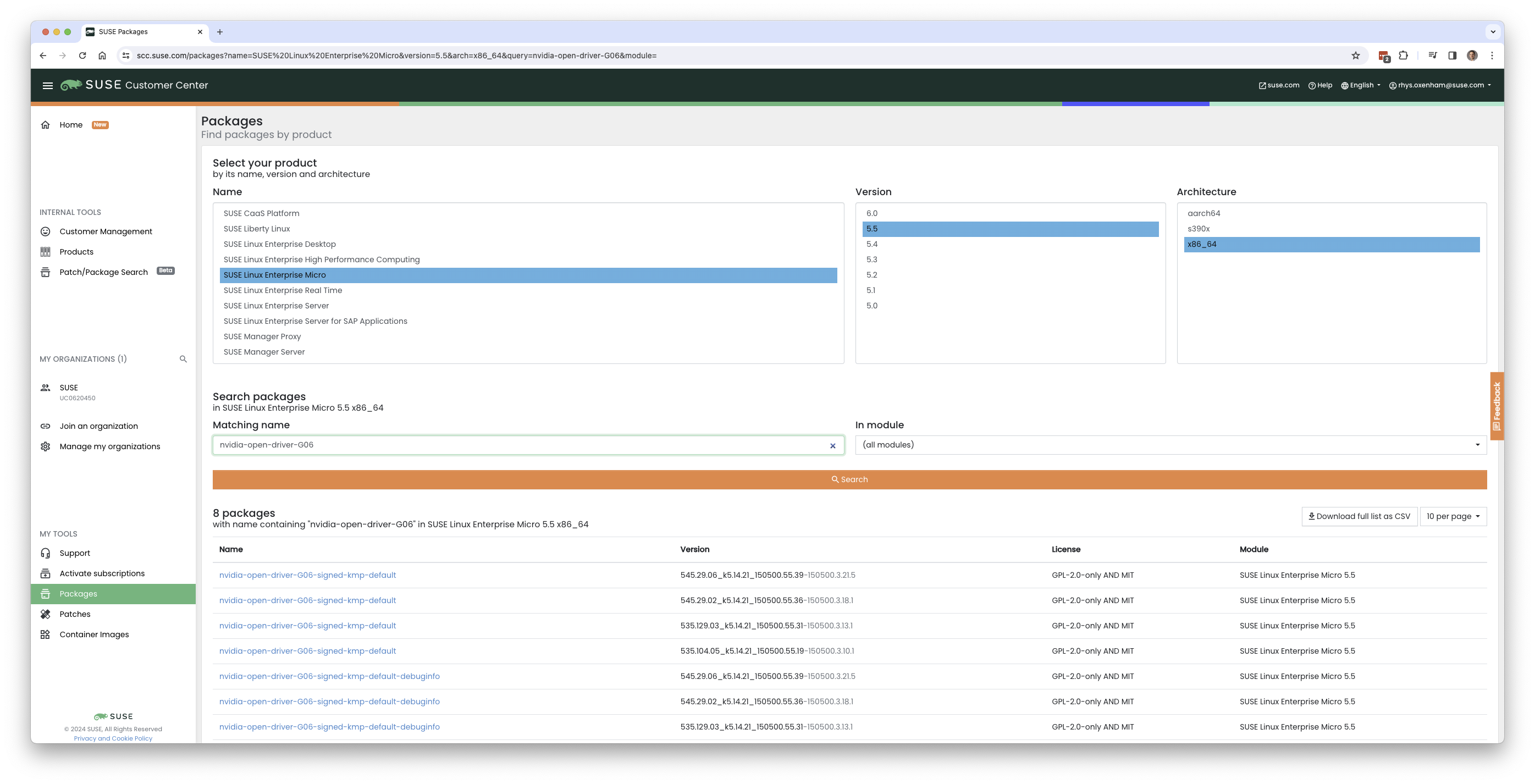

To find the NVIDIA open-driver versions, either run zypper se -s nvidia-open-driver on the target machine or search the SUSE Customer Center for the "nvidia-open-driver" in SLE Micro 6.0 for x86_64.
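The version comparison described above can also be scripted. The following is a minimal sketch, not part of any SUSE or NVIDIA tooling: the helper names driver_base_version and versions_match are hypothetical, and the sample version strings (including the kernel suffix after the underscore) are assumed formats chosen for illustration. It reduces a package version to the upstream NVIDIA driver version so that only that part is compared.

```shell
# Hypothetical helper: reduce a package version to the upstream NVIDIA
# driver version (for example 550.54.14).
driver_base_version() {
    # Keep everything before the first "_" or "-" (assumed suffix separators).
    printf '%s\n' "${1%%[_-]*}"
}

# Hypothetical helper: succeed if both packages share the upstream version.
versions_match() {
    [ "$(driver_base_version "$1")" = "$(driver_base_version "$2")" ]
}

# Example with assumed version strings, as they might appear in the
# Version column of `zypper se -s` output.
if versions_match "550.54.14_k6.4.0_9-1.1" "550.54.14-1"; then
    echo "driver and utilities share the same upstream version"
else
    echo "version mismatch: pick a driver version that NVIDIA also ships"
fi
```

Substitute the version strings reported for the SUSE driver package and the NVIDIA utilities package on your own system.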

When you have confirmed that an equivalent version is available in the NVIDIA repos, you are ready to install the packages on the host operating system. For this, we need to open up a transactional-update session, which creates a new read/write snapshot of the underlying operating system so we can make changes to the immutable platform (for further instructions on transactional-update, see here):

transactional-update shell

When you are in your transactional-update shell, add an additional package repository from NVIDIA. This allows us to pull in additional utilities, for example, nvidia-smi:

zypper ar https://download.nvidia.com/suse/sle15sp6/ nvidia-suse-main
zypper --gpg-auto-import-keys refresh

You can then install the driver and nvidia-compute-utils for additional utilities. If you do not need the utilities, you can omit it, but for testing purposes, it is worth installing at this stage:

zypper install -y --auto-agree-with-licenses nvidia-open-driver-G06-signed-kmp nvidia-compute-utils-G06

If the installation fails, this might indicate a dependency mismatch between the selected driver version and what NVIDIA ships in their repositories. Refer to the previous section to verify that your versions match. If they do not, try installing a different driver version. For example, if the NVIDIA repositories have an earlier version, you can specify nvidia-open-driver-G06-signed-kmp=550.54.14 on your install command to select a version that aligns.
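When pinning a version, it may help to pin the driver and the utilities together so that they cannot drift apart. The sketch below only builds the package arguments and does not install anything. The helper name pinned_packages is hypothetical, and pinning nvidia-compute-utils-G06 to the same version is an assumption you should verify against the repositories.

```shell
# Hypothetical helper: print the driver and utilities package specs,
# both pinned to the same upstream version given as $1.
pinned_packages() {
    printf '%s\n' \
        "nvidia-open-driver-G06-signed-kmp=$1" \
        "nvidia-compute-utils-G06=$1"
}

# Show what would be requested for the example version used above.
pinned_packages 550.54.14

# Inside the transactional-update shell, the result could be passed on:
#   zypper install -y --auto-agree-with-licenses $(pinned_packages 550.54.14)
```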

Next, if you are not using a supported GPU (remembering that the list can be found here), you can see if the driver works by enabling support at the module level, but your mileage may vary — skip this step if you are using a supported GPU:

sed -i '/NVreg_OpenRmEnableUnsupportedGpus/s/^#//g' /etc/modprobe.d/50-nvidia-default.conf
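To see what this sed expression does before touching the real file, you could try it on a scratch copy first. This is a sketch only: the sample file content is an assumption about what the commented-out option line looks like, not a copy of the shipped 50-nvidia-default.conf.

```shell
# Work on a throwaway file so the real configuration is untouched.
tmpconf=$(mktemp)

# Assumed sample content: one active option and the commented-out switch.
cat > "$tmpconf" <<'EOF'
options nvidia NVreg_DeviceFileMode=0660
#options nvidia NVreg_OpenRmEnableUnsupportedGpus=1
EOF

# Same expression as above: on lines that mention the parameter,
# remove the leading "#" so that the option becomes active.
sed -i '/NVreg_OpenRmEnableUnsupportedGpus/s/^#//g' "$tmpconf"

# Print the result for inspection.
cat "$tmpconf"
```

Lines that do not mention NVreg_OpenRmEnableUnsupportedGpus are left unchanged, so the expression is safe to run more than once.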

Now that you have installed these packages, it is time to exit the transactional-update session:

exit

Make sure that you have exited the transactional-update session before proceeding.

Now that you have installed the drivers, it is time to reboot. As SLE Micro is an immutable operating system, the drivers exist only in the new snapshot that you created in the previous step, so they cannot be loaded until the system boots into that snapshot. This happens automatically on the next boot. Issue the reboot command when you are ready:

reboot

Once the system has rebooted successfully, log back in and use the nvidia-smi tool to verify that the driver is loaded successfully and that it can both access and enumerate your GPUs:

nvidia-smi

The output of this command should show you something similar to the following output, noting that in the example below, we have two GPUs:

+---------------------------------------------------------------------------------------+
| NVIDIA-SMI 545.29.06              Driver Version: 545.29.06    CUDA Version: 12.3     |
|-----------------------------------------+----------------------+----------------------+
| GPU  Name                 Persistence-M | Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp   Perf          Pwr:Usage/Cap |         Memory-Usage | GPU-Util  Compute M. |
|                                         |                      |               MIG M. |
|=========================================+======================+======================|
|   0  NVIDIA A100-PCIE-40GB          Off | 00000000:17:00.0 Off |                    0 |
| N/A   29C    P0              35W / 250W |      4MiB / 40960MiB |      0%      Default |
|                                         |                      |             Disabled |
+-----------------------------------------+----------------------+----------------------+
|   1  NVIDIA A100-PCIE-40GB          Off | 00000000:CA:00.0 Off |                    0 |
| N/A   30C    P0              33W / 250W |      4MiB / 40960MiB |      0%      Default |
|                                         |                      |             Disabled |
+-----------------------------------------+----------------------+----------------------+

+---------------------------------------------------------------------------------------+
| Processes:                                                                            |
|  GPU   GI   CI        PID   Type   Process name                            GPU Memory |
|        ID   ID                                                             Usage      |
|=======================================================================================|
|  No running processes found                                                           |
+---------------------------------------------------------------------------------------+
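For scripted checks, nvidia-smi also offers a machine-readable query mode. The sketch below assumes that you know how many GPUs the host should expose. The helper name count_gpus and the EXPECTED_GPUS variable are hypothetical, and the check is skipped on machines where nvidia-smi is not installed.

```shell
# Hypothetical helper: count GPUs from CSV query output on stdin
# (one non-empty line per GPU).
count_gpus() {
    grep -c . || true
}

EXPECTED_GPUS=2   # assumption: adjust to the number of GPUs in your host

if command -v nvidia-smi >/dev/null 2>&1; then
    found=$(nvidia-smi --query-gpu=index,name,driver_version \
                       --format=csv,noheader | count_gpus)
    if [ "$found" -eq "$EXPECTED_GPUS" ]; then
        echo "OK: $found GPU(s) enumerated"
    else
        echo "WARNING: expected $EXPECTED_GPUS GPU(s), found $found" >&2
    fi
fi
```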

This concludes the installation and verification process for the NVIDIA drivers on your SLE Micro system.

26.4 Further validation of the manual installation #

At this stage, all we have been able to verify is that, at the host level, the NVIDIA device can be accessed and that the drivers are loading successfully. However, if we want to be sure that it is functioning, a simple test would be to validate that the GPU can take instructions from a user-space application, ideally via a container, and through the CUDA library, as that is typically what a real workload would use. For this, we can make a further modification to the host OS by installing the nvidia-container-toolkit (NVIDIA Container Toolkit). First, open another transactional-update shell. Note that we could have done this in a single transaction in the previous step; a later section shows how to fully automate it:

transactional-update shell

Next, install the nvidia-container-toolkit package from the NVIDIA Container Toolkit repo:

The nvidia-container-toolkit.repo below contains a stable (nvidia-container-toolkit) and an experimental (nvidia-container-toolkit-experimental) repository. The stable repository is recommended for production use. The experimental repository is disabled by default.

zypper ar https://nvidia.github.io/libnvidia-container/stable/rpm/nvidia-container-toolkit.repo
zypper --gpg-auto-import-keys install -y nvidia-container-toolkit

When you are ready, you can exit the transactional-update shell:

exit

…and reboot the machine into the new snapshot:

reboot

As before, you need to ensure that you have exited the transactional-update shell and rebooted the machine for your changes to take effect.

With the machine rebooted, you can verify that the system can successfully enumerate the devices using the NVIDIA Container Toolkit. The output should be verbose, with INFO and WARN messages, but no ERROR messages:

nvidia-ctk cdi generate --output=/etc/cdi/nvidia.yaml
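To confirm that the specification was written and that the catch-all device name exists, you could list the CDI devices that the toolkit now knows about. This is a sketch: it assumes that nvidia-ctk cdi list prints one device name per line, and the helper name has_cdi_device is hypothetical.

```shell
# Hypothetical helper: succeed if the CDI device name given as $1
# appears in the device list read from stdin.
has_cdi_device() {
    grep -qF -- "$1"
}

if command -v nvidia-ctk >/dev/null 2>&1; then
    if nvidia-ctk cdi list 2>/dev/null | has_cdi_device "nvidia.com/gpu=all"; then
        echo "CDI device nvidia.com/gpu=all is available"
    else
        echo "nvidia.com/gpu=all not found; re-run the cdi generate step" >&2
    fi
fi
```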

Generating the CDI specification ensures that any container started on the machine can use the NVIDIA GPU devices that have been discovered. When ready, you can run a podman-based container. Doing this via podman is a good way of validating access to the NVIDIA device from within a container, which should give confidence for doing the same with Kubernetes at a later stage. Start a container based on SLE BCI, give it access to the NVIDIA devices named in the CDI specification generated by the previous command, and run Bash in it:

podman run --rm --device nvidia.com/gpu=all --security-opt=label=disable -it registry.suse.com/bci/bci-base:latest bash

You will now execute commands from within a temporary podman container. It does not have access to your underlying system and is ephemeral, so whatever we do here will not persist, and you should not be able to break anything on the underlying host. As we are now in a container, we can install the required CUDA libraries, again checking the correct CUDA version for your driver here, although the previous output of nvidia-smi should show the required CUDA version. In the example below, we are installing CUDA 12.3 and pulling many examples, demos and development kits so you can fully validate the GPU:

zypper ar https://developer.download.nvidia.com/compute/cuda/repos/sles15/x86_64/ cuda-suse
zypper in -y cuda-libraries-devel-12-3 cuda-minimal-build-12-3 cuda-demo-suite-12-3

Once this has been installed successfully, do not exit the container. We will now run the deviceQuery CUDA example from within the container itself; it comprehensively validates GPU access via CUDA:

/usr/local/cuda-12/extras/demo_suite/deviceQuery

If successful, you should see output similar to the following. Note the Result = PASS message at the end, and that the system in this example correctly identifies two GPUs, whereas your environment may only have one:

/usr/local/cuda-12/extras/demo_suite/deviceQuery Starting...

 CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 2 CUDA Capable device(s)

Device 0: "NVIDIA A100-PCIE-40GB"
  CUDA Driver Version / Runtime Version 12.2 / 12.1
  CUDA Capability Major/Minor version number: 8.0
  Total amount of global memory: 40339 MBytes (42298834944 bytes)
  (108) Multiprocessors, ( 64) CUDA Cores/MP: 6912 CUDA Cores
  GPU Max Clock rate: 1410 MHz (1.41 GHz)
  Memory Clock rate: 1215 Mhz
  Memory Bus Width: 5120-bit
  L2 Cache Size: 41943040 bytes
  Maximum Texture Dimension Size (x,y,z) 1D=(131072), 2D=(131072, 65536), 3D=(16384, 16384, 16384)
  Maximum Layered 1D Texture Size, (num) layers 1D=(32768), 2048 layers
  Maximum Layered 2D Texture Size, (num) layers 2D=(32768, 32768), 2048 layers
  Total amount of constant memory: 65536 bytes
  Total amount of shared memory per block: 49152 bytes
  Total number of registers available per block: 65536
  Warp size: 32
  Maximum number of threads per multiprocessor: 2048
  Maximum number of threads per block: 1024
  Max dimension size of a thread block (x,y,z): (1024, 1024, 64)
  Max dimension size of a grid size (x,y,z): (2147483647, 65535, 65535)
  Maximum memory pitch: 2147483647 bytes
  Texture alignment: 512 bytes
  Concurrent copy and kernel execution: Yes with 3 copy engine(s)
  Run time limit on kernels: No
  Integrated GPU sharing Host Memory: No
  Support host page-locked memory mapping: Yes
  Alignment requirement for Surfaces: Yes
  Device has ECC support: Enabled
  Device supports Unified Addressing (UVA): Yes
  Device supports Compute Preemption: Yes
  Supports Cooperative Kernel Launch: Yes
  Supports MultiDevice Co-op Kernel Launch: Yes
  Device PCI Domain ID / Bus ID / location ID: 0 / 23 / 0
  Compute Mode:
     < Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >

Device 1: <snip to reduce output for multiple devices>
     < Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >
> Peer access from NVIDIA A100-PCIE-40GB (GPU0) -> NVIDIA A100-PCIE-40GB (GPU1) : Yes
> Peer access from NVIDIA A100-PCIE-40GB (GPU1) -> NVIDIA A100-PCIE-40GB (GPU0) : Yes

deviceQuery, CUDA Driver = CUDART, CUDA Driver Version = 12.3, CUDA Runtime Version = 12.3, NumDevs = 2, Device0 = NVIDIA A100-PCIE-40GB, Device1 = NVIDIA A100-PCIE-40GB
Result = PASS

From here, you can continue to run any other CUDA workload, using compilers and any other aspect of the CUDA ecosystem to run further tests. When done, you can exit from the container, noting that whatever you have installed in there is ephemeral (so will be lost!), and has not impacted the underlying operating system:

exit
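If you later want to automate this validation rather than reading the output by eye, the PASS marker can be checked mechanically. The following is a minimal sketch that assumes the deviceQuery output is piped in on stdin; the helper name device_query_passed is hypothetical.

```shell
# Hypothetical helper: succeed only if the deviceQuery output on stdin
# contains a line starting with the PASS result.
device_query_passed() {
    grep -q '^Result = PASS'
}

# Usage inside the container, after installing the CUDA demo suite:
#   /usr/local/cuda-12/extras/demo_suite/deviceQuery | device_query_passed \
#       && echo "GPU validated via CUDA"
```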

26.5 Implementation with Kubernetes #

Now that we have proven the installation and use of the NVIDIA open-driver on SLE Micro, let us explore configuring Kubernetes on the same machine. This guide does not walk you through deploying Kubernetes, but it assumes that you have installed K3s or RKE2 and that your kubeconfig is configured accordingly, so that standard kubectl commands can be executed as the superuser. We assume that your node forms a single-node cluster, although the core steps should be similar for multi-node clusters. First, ensure that your kubectl access is working:

kubectl get nodes

This should show something similar to the following:

NAME       STATUS   ROLES                       AGE   VERSION
node0001   Ready    control-plane,etcd,master   13d   v1.31.3+rke2r1

You should find that your K3s/RKE2 installation has detected the NVIDIA Container Toolkit on the host and auto-configured the NVIDIA runtime integration into containerd (the container runtime that K3s/RKE2 use). Confirm this by checking the containerd config.toml file:

tail -n8 /var/lib/rancher/rke2/agent/etc/containerd/config.toml

This must show something akin to the following. The equivalent K3s location is /var/lib/rancher/k3s/agent/etc/containerd/config.toml:

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes."nvidia"]
  runtime_type = "io.containerd.runc.v2"
[plugins."io.containerd.grpc.v1.cri".containerd.runtimes."nvidia".options]
  BinaryName = "/usr/bin/nvidia-container-runtime"

If these entries are not present, the detection might have failed. This could be due to the machine or the Kubernetes services not being restarted. Add these manually as above, if required.
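Rather than inspecting the tail of the file by eye, the presence of these entries can be tested directly. This is a sketch under the assumption that the entries look like the example above; the helper name has_nvidia_runtime is hypothetical.

```shell
# Hypothetical helper: succeed if the containerd config given as $1 declares
# an "nvidia" runtime that points at nvidia-container-runtime.
has_nvidia_runtime() {
    [ -r "$1" ] || return 1
    grep -q 'containerd.runtimes."nvidia"' "$1" &&
        grep -q 'BinaryName = "/usr/bin/nvidia-container-runtime"' "$1"
}

# Check the RKE2 and K3s locations; only one will exist on a given host.
for cfg in /var/lib/rancher/rke2/agent/etc/containerd/config.toml \
           /var/lib/rancher/k3s/agent/etc/containerd/config.toml; do
    if has_nvidia_runtime "$cfg"; then
        echo "nvidia runtime configured in $cfg"
    fi
done
```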

Next, we need to configure the NVIDIA RuntimeClass as an additional Kubernetes runtime to the default, ensuring that any user requests for pods that need access to the GPU can use the NVIDIA Container Toolkit to do so, via the nvidia-container-runtime, as configured in the containerd configuration:

kubectl apply -f - <<EOF
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  name: nvidia
handler: nvidia
EOF

The next step is to configure the NVIDIA Device Plugin, which configures Kubernetes to leverage the NVIDIA GPUs as resources within the cluster that can be used, working in combination with the NVIDIA Container Toolkit. This tool initially detects all capabilities on the underlying host, including GPUs, drivers and other capabilities (such as GL) and then allows you to request GPU resources and consume them as part of your applications.

First, you need to add and update the Helm repository for the NVIDIA Device Plugin:

helm repo add nvdp https://nvidia.github.io/k8s-device-plugin
helm repo update

Now you can install the NVIDIA Device Plugin:

helm upgrade -i nvdp nvdp/nvidia-device-plugin --namespace nvidia-device-plugin --create-namespace --version 0.14.5 --set runtimeClassName=nvidia

After a few minutes, you should see a new pod running. It completes the detection on your available nodes and tags them with the number of GPUs that have been detected:

kubectl get pods -n nvidia-device-plugin
NAME                              READY   STATUS    RESTARTS      AGE
nvdp-nvidia-device-plugin-jp697   1/1     Running   2 (12h ago)   6d3h

kubectl get node node0001 -o json | jq .status.capacity
{
  "cpu": "128",
  "ephemeral-storage": "466889732Ki",
  "hugepages-1Gi": "0",
  "hugepages-2Mi": "0",
  "memory": "32545636Ki",
  "nvidia.com/gpu": "1", <----
  "pods": "110"
}

Now you are ready to create an NVIDIA pod that attempts to use this GPU. Let us try with the CUDA Benchmark container:

kubectl apply -f - <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: nbody-gpu-benchmark
  namespace: default
spec:
  restartPolicy: OnFailure
  runtimeClassName: nvidia
  containers:
  - name: cuda-container
    image: nvcr.io/nvidia/k8s/cuda-sample:nbody
    args: ["nbody", "-gpu", "-benchmark"]
    resources:
      limits:
        nvidia.com/gpu: 1
    env:
    - name: NVIDIA_VISIBLE_DEVICES
      value: all
    - name: NVIDIA_DRIVER_CAPABILITIES
      value: all
EOF

If all went well, you can look at the logs and see the benchmark information:

kubectl logs nbody-gpu-benchmark
Run "nbody -benchmark [-numbodies=<numBodies>]" to measure performance.
	-fullscreen       (run n-body simulation in fullscreen mode)
	-fp64             (use double precision floating point values for simulation)
	-hostmem          (stores simulation data in host memory)
	-benchmark        (run benchmark to measure performance)
	-numbodies=<N>    (number of bodies (>= 1) to run in simulation)
	-device=<d>       (where d=0,1,2.... for the CUDA device to use)
	-numdevices=<i>   (where i=(number of CUDA devices > 0) to use for simulation)
	-compare          (compares simulation results running once on the default GPU and once on the CPU)
	-cpu              (run n-body simulation on the CPU)
	-tipsy=<file.bin> (load a tipsy model file for simulation)

NOTE: The CUDA Samples are not meant for performance measurements. Results may vary when GPU Boost is enabled.

> Windowed mode
> Simulation data stored in video memory
> Single precision floating point simulation
> 1 Devices used for simulation
GPU Device 0: "Turing" with compute capability 7.5

> Compute 7.5 CUDA device: [Tesla T4]
40960 bodies, total time for 10 iterations: 101.677 ms
= 165.005 billion interactions per second
= 3300.103 single-precision GFLOP/s at 20 flops per interaction
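In automation, the throughput line at the end of these logs can serve as a simple success signal, because it is printed only once the benchmark has run on the GPU. The sketch below assumes the log format shown above; the helper name nbody_gflops is hypothetical, and the call is skipped when kubectl is not available.

```shell
# Hypothetical helper: print the single-precision GFLOP/s figure from
# nbody benchmark logs read on stdin (empty output if the line is absent).
nbody_gflops() {
    awk '/single-precision GFLOP\/s/ { print $2 }'
}

if command -v kubectl >/dev/null 2>&1; then
    kubectl logs nbody-gpu-benchmark 2>/dev/null | nbody_gflops
fi
```

An empty result suggests that the pod has not finished yet or that it failed before reaching the benchmark.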

Finally, if your applications require OpenGL, you can install the required NVIDIA OpenGL libraries at the host level, and the NVIDIA Device Plugin and NVIDIA Container Toolkit can make them available to containers. To do this, install the package as follows:

transactional-update pkg install nvidia-gl-G06

You need to reboot to make this package available to your applications. The NVIDIA Device Plugin should automatically redetect this via the NVIDIA Container Toolkit.

26.6 Bringing it together via Edge Image Builder #

Now that you have demonstrated full functionality of your applications and GPUs on SLE Micro, you can use Chapter 10, Edge Image Builder to bring it all together in a deployable ISO or RAW disk image. This guide does not explain how to use Edge Image Builder, but it provides the configuration needed to build such an image. Below you can find an example of an image definition, along with the necessary Kubernetes configuration files, to ensure that all the required components are deployed out of the box. Here is the directory structure of the Edge Image Builder directory for the example shown below:

.
├── base-images
│   └── SL-Micro.x86_64-6.0-Base-SelfInstall-GM2.install.iso
├── eib-config-iso.yaml
├── kubernetes
│   ├── config
│   │   └── server.yaml
│   ├── helm
│   │   └── values
│   │       └── nvidia-device-plugin.yaml
│   └── manifests
│       └── nvidia-runtime-class.yaml
└── rpms
    └── gpg-keys
        └── nvidia-container-toolkit.key

Let us explore those files. First, here is a sample image definition for a single-node cluster running K3s that deploys the utilities and OpenGL packages, too (eib-config-iso.yaml):

apiVersion: 1.0
image:
  arch: x86_64
  imageType: iso
  baseImage: SL-Micro.x86_64-6.0-Base-SelfInstall-GM2.install.iso
  outputImageName: deployimage.iso
operatingSystem:
  time:
    timezone: Europe/London
    ntp:
      pools:
        - 2.suse.pool.ntp.org
  isoConfiguration:
    installDevice: /dev/sda
  users:
    - username: root
      encryptedPassword: $6$XcQN1xkuQKjWEtQG$WbhV80rbveDLJDz1c93K5Ga9JDjt3mF.ZUnhYtsS7uE52FR8mmT8Cnii/JPeFk9jzQO6eapESYZesZHO9EslD1
  packages:
    packageList:
      - nvidia-open-driver-G06-signed-kmp-default
      - nvidia-compute-utils-G06
      - nvidia-gl-G06
      - nvidia-container-toolkit
    additionalRepos:
      - url: https://download.nvidia.com/suse/sle15sp6/
      - url: https://nvidia.github.io/libnvidia-container/stable/rpm/x86_64
    sccRegistrationCode: [snip]
kubernetes:
  version: v1.31.3+k3s1
  helm:
    charts:
      - name: nvidia-device-plugin
        version: v0.14.5
        installationNamespace: kube-system
        targetNamespace: nvidia-device-plugin
        createNamespace: true
        valuesFile: nvidia-device-plugin.yaml
        repositoryName: nvidia
    repositories:
      - name: nvidia
        url: https://nvidia.github.io/k8s-device-plugin

This is just an example. You may need to customize it to fit your requirements and expectations. Additionally, if using SLE Micro, you need to provide your own sccRegistrationCode to resolve package dependencies and pull the NVIDIA drivers.

Besides this, we need to add additional components, so they get loaded by Kubernetes at boot time. The EIB directory needs a kubernetes directory first, with subdirectories for the configuration, Helm chart values and any additional manifests required:

mkdir -p kubernetes/config kubernetes/helm/values kubernetes/manifests

Let us now set up the (optional) Kubernetes configuration by choosing a CNI (which defaults to Cilium if unselected) and enabling SELinux:

cat << EOF > kubernetes/config/server.yaml
cni: cilium
selinux: true
EOF

Now ensure that the NVIDIA RuntimeClass is created on the Kubernetes cluster:

cat << EOF > kubernetes/manifests/nvidia-runtime-class.yaml
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  name: nvidia
handler: nvidia
EOF

We use the built-in Helm Controller to deploy the NVIDIA Device Plugin through Kubernetes itself. Let’s provide the runtime class in the values file for the chart:

cat << EOF > kubernetes/helm/values/nvidia-device-plugin.yaml
runtimeClassName: nvidia
EOF

We need to grab the NVIDIA Container Toolkit RPM public key before proceeding:

mkdir -p rpms/gpg-keys
curl -o rpms/gpg-keys/nvidia-container-toolkit.key https://nvidia.github.io/libnvidia-container/gpgkey

All the required artifacts, including Kubernetes binary, container images, Helm charts (and any referenced images), will be automatically air-gapped, meaning that the systems at deploy time should require no Internet connectivity by default. Now you need only to grab the SLE Micro ISO from the SUSE Downloads Page (and place it in the base-images directory), and you can call the Edge Image Builder tool to generate the ISO for you. To complete the example, here is the command that was used to build the image:

podman run --rm --privileged -it -v /path/to/eib-files/:/eib \
registry.suse.com/edge/3.2/edge-image-builder:1.1.0 \
build --definition-file eib-config-iso.yaml
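Before invoking the build, it can be worth confirming that every file from the directory structure shown earlier is in place. The following pre-flight check is a sketch only and not part of Edge Image Builder; the function name check_eib_layout is hypothetical, and the file list simply mirrors this example.

```shell
# Hypothetical helper: report any file from the example layout that is
# missing under the EIB directory given as $1. Returns non-zero if any is.
check_eib_layout() {
    dir=$1
    missing=0
    for f in \
        base-images/SL-Micro.x86_64-6.0-Base-SelfInstall-GM2.install.iso \
        eib-config-iso.yaml \
        kubernetes/config/server.yaml \
        kubernetes/helm/values/nvidia-device-plugin.yaml \
        kubernetes/manifests/nvidia-runtime-class.yaml \
        rpms/gpg-keys/nvidia-container-toolkit.key
    do
        if [ ! -f "$dir/$f" ]; then
            echo "missing: $f" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Usage: check_eib_layout /path/to/eib-files && echo "layout complete"
```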

For further instructions, please see the documentation for Edge Image Builder.

26.7 Resolving issues #

26.7.1 nvidia-smi does not find the GPU #

Check the kernel messages using dmesg. If they indicate a failure to allocate NvKmsKapiDevice, apply the unsupported GPU workaround:

sed -i '/NVreg_OpenRmEnableUnsupportedGpus/s/^#//g' /etc/modprobe.d/50-nvidia-default.conf

NOTE: You will need to reload the kernel module, or reboot, if you change the kernel module configuration in the above step for it to take effect.
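The dmesg check can also be expressed as a small filter. This is a sketch: the helper name needs_unsupported_gpu_workaround is hypothetical, and the match is deliberately loose (case-insensitive, on a prefix of the device name) because the exact wording of the kernel message is an assumption.

```shell
# Hypothetical helper: succeed if the kernel messages on stdin mention the
# KMS device allocation failure described above.
needs_unsupported_gpu_workaround() {
    grep -qi 'allocate NvKmsKap'
}

# Usage on the host:
#   dmesg | needs_unsupported_gpu_workaround && echo "apply the workaround"
```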